How to Prevent AI Content Theft and Take Control of Your Digital Assets

Every day, AI agents crawl millions of websites. They extract articles, analyze pricing, copy product descriptions, and harvest years of carefully crafted content. Some do this to train the next ChatGPT. Others gather competitive intelligence. A few actually help drive traffic to your site.

The companies behind these bots aren’t asking for permission. OpenAI, Meta, and others have built billion-dollar businesses on content they didn’t create. Music publishers call it willful copyright theft(1). Authors are filing lawsuits. But while the legal battles rage on, your content remains exposed.

You have a choice to make. You can watch as AI companies profit from your work. Or you can take control and decide exactly which AI agents access your content, when they access it, and what they can do with it.

Key takeaways

- Not all AI traffic is harmful. Some agents bring qualified traffic and reduce support costs, while others steal your intellectual property.

- Blanket blocking doesn’t work. You’ll miss valuable opportunities and legitimate automated traffic that helps your business.

- Behavioral analysis beats static rules. AI agents constantly evolve their tactics, requiring dynamic detection methods.

- Different content needs different protection. Your pricing API needs strict controls, while product pages might benefit from AI visibility.

- You can’t protect what you can’t see. Real-time visibility into AI agent behavior is essential for making informed decisions.

- Control requires three elements. You need to detect who’s accessing your content, understand their intent, and enforce your rules appropriately.

What is AI content theft?

AI content theft happens when automated agents scrape your website’s content without permission. They do so to train AI models, build competing services, or resell your data. It’s the digital equivalent of someone photocopying your entire library, then using it to build their own business. Right now, there are two types of AI crawlers:

- Training bots: These crawlers collect data to train large language models (LLMs). OpenAI, Anthropic, and Meta use them to build their next AI models. They take your content without asking, without paying, and often without attribution.

- Agentic AI: These are task-focused bots that browse websites on behalf of users. They might compare prices, gather research, or extract specific information. Some add value to your business. Others don’t.

Most companies think only search engines crawl their content. That’s no longer true. AI agents now represent a growing percentage of automated traffic on many websites. Consider how Clearview AI continues to scrape over a hundred billion facial images without consent(2). Or how scraped data from workouts posted to the fitness app Strava inadvertently exposed military base locations and users’ homes(3).

The pattern is clear: AI companies do not ask for permission to scrape your content. They scrape your content and see if they can get away with it. More often than not, they can. Even if they are sued, it takes years to settle these lawsuits. The bottom line is that companies need to take matters into their own hands if they want to protect themselves appropriately against AI content theft.

Why blanket blocking doesn’t work

Simply blocking all AI agents isn’t the right solution, because not all AI bots are the same. Some AI agents can actually help your business. For example, shopping agents may help connect buyers with your products, LLM scrapers can help you show up in LLM search results, and customer service bots can help reduce support tickets by finding answers on your website.

However, other AI bots are actively harmful. For example, content scrapers can steal your intellectual property for AI training purposes, competitor scrapers can monitor your pricing and inventory, and malicious AI agents can overload your API and slow down your website.

Major Swiss retailer Coop experienced this firsthand when they discovered that scraping bots were overloading the Google API they relied on for some of their website features. These scrapers were costing the company between $5,000 and $10,000 a month in extra operational costs.

Coop worked together with DataDome to remove all the bad bots, roughly 25% of their traffic, while keeping the helpful bots. As a result, Coop’s page load times improved, their SEO rankings went up, and legitimate customers had a better experience.

How to maintain control over your digital assets

To combat AI bots, you need a framework that adapts as fast as they do. This means building three core capabilities: visibility into who’s accessing your content, policies that define acceptable use, and enforcement that happens automatically at the right level.

Modern AI agents don’t announce themselves clearly. They use rotating IP addresses, human-like browsing patterns, and distributed crawling from multiple locations. Traditional security tools like a WAF often miss them completely. You need a solution with behavioral analysis that spots AI agents by how they act, not just what they claim to be.

Once you identify an AI agent, you need to understand its purpose. Training crawlers leave specific fingerprints: They systematically access large volumes of content, ignore robots.txt directives, and extract text without engaging with interactive elements. Legitimate agents behave differently. They follow specific user paths, respect rate limits, and interact with your site like humans would.
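To make those fingerprints concrete, here is a minimal sketch that counts how many of the three signals a single client session shows. The `SessionStats` fields, the function name, and the volume threshold are all hypothetical choices for illustration. Real behavioral detection relies on far more signals than this.

```python
from dataclasses import dataclass


@dataclass
class SessionStats:
    """Per-client counters for one observation window (hypothetical schema)."""
    pages_requested: int      # content pages fetched
    disallowed_hits: int      # requests to paths that robots.txt disallows
    interactive_events: int   # searches, form posts, add-to-cart clicks, etc.


def training_crawler_score(s: SessionStats, volume_threshold: int = 500) -> int:
    """Return how many of the three training-crawler fingerprints are present (0 to 3)."""
    score = 0
    if s.pages_requested >= volume_threshold:
        score += 1  # systematic, high-volume access
    if s.disallowed_hits > 0:
        score += 1  # ignores robots.txt directives
    if s.pages_requested > 0 and s.interactive_events == 0:
        score += 1  # extracts text without touching interactive elements
    return score


crawler = SessionStats(pages_requested=4200, disallowed_hits=37, interactive_events=0)
shopper = SessionStats(pages_requested=12, disallowed_hits=0, interactive_events=5)
print(training_crawler_score(crawler))  # 3
print(training_crawler_score(shopper))  # 0
```

A score like this is only a starting point. Agents that mimic human browsing will not trip simple thresholds, which is why static rules alone fall short.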

This understanding lets you apply the right controls to the right content. Your high-value content (proprietary research, premium articles, unique datasets) needs strict protection from unauthorized AI training. Block all unapproved access here. But your public content might benefit from AI visibility. Product descriptions could reach more customers through shopping agents. Support documentation might reduce tickets when AI assistants can find answers.

Don’t forget your mobile apps or API endpoints either. As Coop discovered, bots exploiting features like “find in stock” or store locators through an API can generate massive unexpected costs. All endpoints need protection, and each type of content needs its own protection strategy. One-size-fits-all doesn’t work when you’re dealing with sophisticated AI agents that adapt their tactics daily.
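One way to picture content-specific protection is a policy table keyed by path prefix, where the most specific match wins. The sketch below assumes hypothetical paths, action names, and a default action. None of these come from a particular product.

```python
# Hypothetical policy table: the longest matching path prefix wins.
CONTENT_POLICIES = {
    "/research/": "block_unapproved",    # proprietary research, premium articles
    "/api/pricing": "block_unapproved",  # pricing API needs strict controls
    "/api/": "rate_limit",               # store locator, "find in stock" endpoints
    "/products/": "allow",               # product pages may benefit from AI visibility
    "/support/": "allow",                # docs that AI assistants can use to answer questions
}
DEFAULT_ACTION = "challenge"  # assumed fallback for paths with no explicit policy


def action_for(path: str, agent_approved: bool) -> str:
    """Pick the action for a request path, given whether the agent is on your approved list."""
    matches = [prefix for prefix in CONTENT_POLICIES if path.startswith(prefix)]
    if not matches:
        return DEFAULT_ACTION
    action = CONTENT_POLICIES[max(matches, key=len)]
    if action == "block_unapproved":
        return "allow" if agent_approved else "block"
    return action


print(action_for("/api/pricing/v2", agent_approved=False))  # block
print(action_for("/api/stores", agent_approved=False))      # rate_limit
print(action_for("/research/q3", agent_approved=True))      # allow
```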

Your action plan to protect against AI content theft

The difference between companies that successfully protect their digital assets and those that don’t comes down to taking concrete action. This is your roadmap:

Audit all automated access

You can’t fix what you don’t measure. Most companies don’t know how much of their traffic is automated, let alone which bots are doing what. Before you can protect your content, you need a clear picture of your current situation.

- Identify what percentage of your traffic is automated

- Determine which bots access which content

- Calculate the cost of unauthorized scraping (server resources, API calls, lost IP value)
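A first-pass audit can come straight from your access logs. The sketch below assumes the log has already been parsed into (path, user agent) pairs and that `cost_per_request` is a figure you estimate yourself. The token list is illustrative and not exhaustive. Because it only matches bots that declare themselves in the user agent, treat the result as a lower bound.

```python
from collections import Counter

# Illustrative substrings of self-declared AI crawler user agents; not exhaustive.
AI_AGENT_TOKENS = ("GPTBot", "ClaudeBot", "CCBot", "PerplexityBot", "Bytespider")


def audit(requests, cost_per_request):
    """Summarize declared AI bot traffic from (path, user_agent) pairs."""
    hits_by_bot = Counter()
    paths_by_bot = {}
    for path, user_agent in requests:
        for token in AI_AGENT_TOKENS:
            if token.lower() in user_agent.lower():
                hits_by_bot[token] += 1
                paths_by_bot.setdefault(token, Counter())[path] += 1
                break  # count each request once
    automated = sum(hits_by_bot.values())
    return {
        "automated_share": automated / len(requests) if requests else 0.0,
        "hits_by_bot": dict(hits_by_bot),
        "paths_by_bot": {bot: dict(paths) for bot, paths in paths_by_bot.items()},
        "estimated_cost": automated * cost_per_request,
    }


sample = [
    ("/articles/1", "Mozilla/5.0 (compatible; GPTBot/1.0)"),
    ("/articles/2", "Mozilla/5.0 (compatible; GPTBot/1.0)"),
    ("/api/pricing", "CCBot/2.0"),
    ("/products/42", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"),
]
report = audit(sample, cost_per_request=0.002)
print(report["automated_share"])  # 0.75
print(report["hits_by_bot"])
```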

Define acceptable AI usage policies

Once you know who’s accessing your content, you need to decide whether they should be allowed to. This is a business decision. Which AI agents help your business grow? Which ones steal value? Your policies should reflect your business goals, not just your security concerns.

Allowed AI uses might include:

- Search engines crawling for SEO

- Approved research tools that cite sources

- Partner integrations that add value

Blocked uses should include:

- Unauthorized training data collection

- Competitive intelligence gathering

- Content theft without attribution
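You can publish part of such a policy in robots.txt and check how it reads using Python’s standard `urllib.robotparser` module. The rules and the example.com URLs below are made up for illustration. Keep in mind that robots.txt is advisory: it states your policy, but as noted earlier, many crawlers ignore it, so it does not replace enforcement.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: refuse two declared AI crawlers entirely,
# and keep everyone else out of premium research.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /research/
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.can_fetch("GPTBot", "https://example.com/products/42"))     # False
print(parser.can_fetch("Googlebot", "https://example.com/products/42"))  # True
print(parser.can_fetch("Googlebot", "https://example.com/research/q3"))  # False
```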

Deploy tools that can dynamically classify and control

Policies without enforcement are just suggestions. And static rules won’t keep up with AI agents that change tactics daily. You need protection that learns and adapts as fast as the threats do. You need protection that:

- Adapts in real time: machine learning models that recognize new bot patterns as they emerge

- Provides granular control: different rules for different pages, APIs, and user agents

- Maintains performance: protection that doesn’t slow down legitimate users

- Offers clear visibility: dashboards that show exactly what’s happening on your site

How DataDome protects your digital assets

DataDome provides intelligent, automated protection that removes bad traffic from your digital assets while preserving good traffic. With DataDome, you will have:

- Sub-50ms detection with zero friction. Our multi-layered AI analyzes every request’s intent immediately. Real users never notice we’re there. Bots never make it through.

- Turn scrapers into revenue. Why just block AI crawlers when you can charge them? Set up paywalls for any AI provider through our dashboard. Your content becomes a product, not a target.

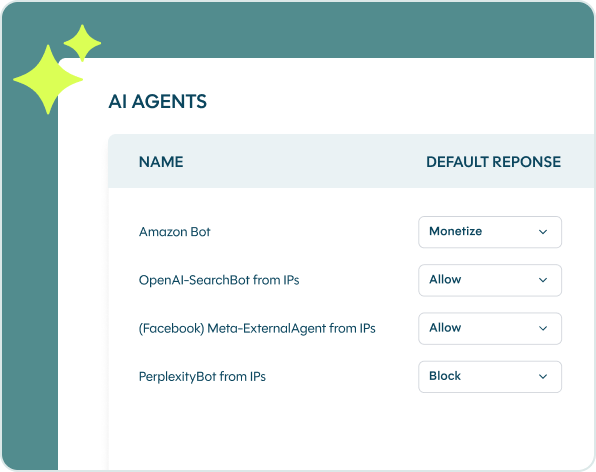

- See every AI agent in real time. Know exactly which LLM crawlers and agentic AI providers are hitting your site. Set individual policies: allow, block, or monetize.

- Auto-scale to 200x traffic spikes. 30+ global points of presence handle massive bot attacks without breaking a sweat. Your site stays fast no matter what hits it.

- SOC team watching 24/7. Expert-supervised AI models adapt to new threats automatically. You sleep. We don’t.

- Industry-leading accuracy. False positives kill conversions. That’s why we obsess over accuracy. Your legitimate users sail through while bots hit a wall.

DataDome puts you back in control with intelligent automation that knows the difference between a customer and a crawler. Protect your digital assets from AI scrapers today.

References

- (1) https://www.france24.com/en/live-news/20250917-top-music-body-says-ai-firms-guilty-of-wilful-copyright-theft

- (2) https://techhq.com/news/clearview-ai-no-more-controversial-facial-rec-tool-for-us-private-companies/

- (3) https://www.theguardian.com/world/2018/jan/28/fitness-tracking-app-gives-away-location-of-secret-us-army-bases