How to Stop Bot Traffic: Detection, Prevention, & Protection

Bots now generate more than half of all internet traffic. Some of that traffic is helpful, such as search engine crawlers, uptime monitors, and payment verification services. But a growing share is malicious, and it is accelerating fast. Across DataDome’s global customer base, AI bot and crawler traffic quadrupled in the first eight months of 2025 alone.

Bad bots scrape your content, stuff stolen credentials into login pages, hoard inventory, and launch attacks that can take your site offline. Stopping them requires more than basic tools, and most businesses are not prepared. When DataDome tested nearly 17,000 websites, over 61% were not protected against simple bot attacks. Only 2.8% were fully protected.

This guide focuses on what actually works to stop bot traffic: practical methods, their limitations, and why a dedicated bot management solution is the most effective defense.

Key takeaways

- Simple fixes are not enough. CAPTCHAs, WAFs, rate limiting, and robots.txt each have significant limitations. Sophisticated bots bypass them routinely.

- You need to stop bots, not just filter them from reports. GA4 filtering cleans your analytics data, but those bots are still reaching your servers and consuming resources.

- The business cost is real. Bot traffic enables credential stuffing, scraping, scalping, and fraud, while skewing every metric you rely on.

- AI-powered bot management is the most effective defense. Real-time behavioral analysis across hundreds of signals catches what static rules cannot.

What is bot traffic?

Bot traffic is any website or app traffic generated by automated software programs (bots) rather than human visitors. Bots can be good or bad. The key difference between human and bot traffic is that bots are automated and can perform actions at scale, often without direct human oversight.

Good bots perform useful tasks. Search engine crawlers like Googlebot and Bingbot index your pages so they appear in search results. Analytics and monitoring bots collect data that helps you understand user engagement and site performance. Uptime monitoring tools check your site’s availability. Payment processors verify transactions. These bots generally identify themselves honestly and follow your robots.txt directives.

Bad bots are designed to exploit. They scrape pricing data and proprietary content, test stolen login credentials at scale, buy out limited inventory faster than any human could, and flood your servers with junk traffic.

Bots can also imitate real users. By inflating traffic numbers and interacting with forms and buttons, they distort the metrics you use to measure ROI.

How to identify bot traffic on your site

Before you can stop bots, you need to identify them. While sophisticated bots work hard to blend in, they still leave patterns in your server logs and analytics. Detection starts with analyzing incoming requests and watching for unusual spikes in activity that may indicate automated or malicious behavior.

5 warning signs of malicious bot traffic

Malicious bot traffic tends to show these warning signs:

- Unusually high bounce rates or zero-second sessions. Bots often land on a single page and leave immediately. If you see a surge of sessions with 0-second engagement times, especially concentrated on specific pages like login or checkout, that traffic is likely automated.

- Traffic spikes from unexpected geographic locations. A sudden flood of visits from countries where you have no customers or marketing campaigns running is a strong signal. Bad bots often originate from data centers or regions that don’t match your audience profile.

- Abnormal traffic patterns at odd hours. Human traffic follows predictable daily cycles. Bot traffic does not. If your server logs show sustained high-volume requests at 3am local time when your audience is asleep, investigate further.

- Surges in failed login attempts. A sharp increase in failed logins (especially across many different accounts in a short window) points to credential stuffing. This is when bots systematically test stolen username-password pairs harvested from data breaches on other sites.

- Repetitive requests to the same endpoints. Bots targeting your pricing pages, API endpoints, or product catalog at high frequency often indicate scraping activity. Content scraping is often performed by scraper bots, which extract batches of content from a resource without consent and may reuse it elsewhere. Check your server logs for IP addresses or user agents making hundreds or thousands of requests to the same URL patterns.
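The last two checks above can be run against raw server logs. The following is a minimal sketch, assuming access logs in Common or Combined Log Format and a login endpoint at `/login` that answers failed attempts with 401 or 403. The function names, the path, and the thresholds are illustrative assumptions, not values from any particular product.

```python
import re
from collections import Counter

# Assumed log format (Common/Combined Log Format), for example:
# 203.0.113.7 - - [10/Oct/2025:03:00:01 +0000] "GET /pricing HTTP/1.1" 200 512
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3})'
)


def parse_lines(lines):
    """Yield (ip, method, path, status) for each line matching the assumed format."""
    for line in lines:
        m = LOG_LINE.match(line)
        if m:
            # Strip the query string so /pricing?a=1 and /pricing?a=2 count together.
            yield m["ip"], m["method"], m["path"].split("?")[0], int(m["status"])


def repetitive_requesters(lines, threshold=100):
    """Return {(ip, path): count} for IP/path pairs at or above the threshold."""
    counts = Counter((ip, path) for ip, _, path, _ in parse_lines(lines))
    return {key: n for key, n in counts.items() if n >= threshold}


def failed_login_sources(lines, login_path="/login", threshold=20):
    """Return {ip: count} of failed POSTs (401/403) to the assumed login path."""
    failures = Counter(
        ip
        for ip, method, path, status in parse_lines(lines)
        if method == "POST" and path == login_path and status in (401, 403)
    )
    return {ip: n for ip, n in failures.items() if n >= threshold}
```

Because this groups by IP address, it only surfaces bots that reuse an address. Traffic spread across many rotating IPs would stay under both thresholds.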

How to stop bot traffic: 6 methods and their limitations

There is no single fix that stops all bot traffic. Most of the methods below have strengths and limitations. Here is what works and where these methods fall short.

Rate limiting and IP-based blocking

Rate limiting restricts how many requests a single IP address can make within a given time frame. It is effective against scraping and brute-force attacks, where bots attempt logins at scale or overwhelm web resources. It is one of the simplest defenses and can slow down basic bots that hammer your server from a single source.
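As an illustration, per-IP rate limiting can be sketched with a sliding window of recent request timestamps. This is a minimal in-memory example under stated assumptions: the class name, the default limits, and the injectable `clock` parameter are all hypothetical, and a production deployment would usually keep this state in a shared store rather than in one process.

```python
import time
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Allow at most `max_requests` per `window_seconds` for each client IP."""

    def __init__(self, max_requests=100, window_seconds=60.0, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)  # ip -> timestamps of recently accepted requests

    def allow(self, ip):
        """Return True if the request is within the limit, False otherwise."""
        now = self.clock()
        hits = self._hits[ip]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # the caller would typically answer with HTTP 429
        hits.append(now)
        return True
```

A request handler would call `limiter.allow(client_ip)` before doing any work and reject the request when it returns `False`.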

Where it falls short: Modern bots rotate through thousands of IP addresses (including residential proxies), so they rarely trip rate limits tied to individual IPs. Rate limiting is a useful baseline, but on its own, it stops only the least sophisticated attacks.

Web application firewalls (WAFs)

A WAF filters incoming traffic based on predefined rules and signatures. It can block known malicious IPs, suspicious user agents, and common attack patterns. WAFs are often effective at stopping injection attacks and known exploits.
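To make "predefined rules and signatures" concrete, here is a toy rule check. It is only a sketch of the idea, not how any real WAF is implemented: the blocked network, the user-agent substrings, and the regex signatures are hypothetical examples.

```python
import ipaddress
import re

# Hypothetical static rule set, for illustration only.
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]
BLOCKED_UA_SUBSTRINGS = ("sqlmap", "nikto")
ATTACK_PATTERNS = [
    re.compile(r"(?i)union\s+select"),  # crude SQL injection signature
    re.compile(r"(?i)<script\b"),       # crude cross-site scripting signature
    re.compile(r"\.\./"),               # path traversal
]


def waf_decision(ip, user_agent, url):
    """Return 'block' if any static rule matches the request, else 'allow'."""
    addr = ipaddress.ip_address(ip)
    if any(addr in net for net in BLOCKED_NETWORKS):
        return "block"
    ua = user_agent.lower()
    if any(s in ua for s in BLOCKED_UA_SUBSTRINGS):
        return "block"
    if any(p.search(url) for p in ATTACK_PATTERNS):
        return "block"
    return "allow"
```

A request from an unlisted IP with a browser-like user agent and an ordinary URL matches none of these rules, so it is allowed regardless of whether a person or a script sent it.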

Where it falls short: Advanced bots mimic legitimate browser behavior and craft requests that pass WAF rule sets. WAF rules are static. Bots evolve. If an attacker’s requests look normal to the WAF, the traffic sails through unchallenged. WAFs handle application-layer security well, but they were not built for bot management.

CAPTCHAs

CAPTCHAs challenge users to prove they are human. Challenges such as Google reCAPTCHA are placed on sensitive areas of a site to differentiate human users from automated bots. They remain widely used, but their effectiveness has declined significantly.

Where it falls short: AI-powered CAPTCHA solvers can defeat image-based challenges in seconds. CAPTCHA farms, where low-paid workers solve challenges on behalf of bots, remain cheap and readily available. Meanwhile, CAPTCHAs create friction for legitimate users, hurt conversion rates, and pose accessibility issues. They are a speed bump, not a barrier.

Robots.txt

Your robots.txt file tells well-behaved bots which parts of your site they should and should not crawl. Legitimate search engine crawlers respect these directives. DataDome’s research found that 88.9% of robots.txt files explicitly disallow GPTBot, the most-referenced AI crawler.
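For illustration, the sketch below uses Python's standard `urllib.robotparser` to show how a compliant crawler would interpret a robots.txt file that disallows GPTBot. The file contents, the `/admin/` path, and the `example.com` URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; the /admin/ path is a placeholder.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(user_agent, url):
    """Return what a well-behaved crawler would conclude before fetching `url`."""
    return parser.can_fetch(user_agent, url)
```

With these rules, `may_crawl("GPTBot", "https://example.com/articles/1")` is `False` and `may_crawl("Googlebot", "https://example.com/articles/1")` is `True`. The check runs entirely on the crawler's side, so it only has an effect when the crawler chooses to perform it.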

Where it falls short: Malicious bots ignore robots.txt entirely. It has no enforcement mechanism. Think of it as a polite request, not a lock on the door.

Honeypots

Honeypots are hidden links or form fields invisible to human users but visible to bots that parse your page’s HTML code. When a bot interacts with a honeypot element, you can flag and block that traffic.
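A minimal sketch of a honeypot form field follows. The field name `website_url`, the form markup, and the helper function are hypothetical. The server-side check simply treats any value in the hidden field as a sign of automation.

```python
HONEYPOT_FIELD = "website_url"  # hypothetical field name, hidden from people via CSS

# Example markup: the extra input is positioned off-screen and skipped by keyboard
# navigation, so a person should never fill it in.
FORM_HTML = f"""
<form method="post" action="/signup">
  <input type="email" name="email" required>
  <input type="text" name="{HONEYPOT_FIELD}" tabindex="-1" autocomplete="off"
         style="position:absolute; left:-9999px" aria-hidden="true">
  <button type="submit">Sign up</button>
</form>
"""


def is_honeypot_triggered(form_data):
    """Return True when the hidden field arrives with any non-blank value."""
    return bool(form_data.get(HONEYPOT_FIELD, "").strip())
```

A form handler would call `is_honeypot_triggered(submitted_fields)` and flag or drop the submission when it returns `True`.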

Where it falls short: Honeypots catch unsophisticated bots that blindly crawl and fill every field on a page. They are easy to implement but ineffective against bots that render pages like a real browser and avoid invisible elements.

AI-powered bot management: the most effective approach

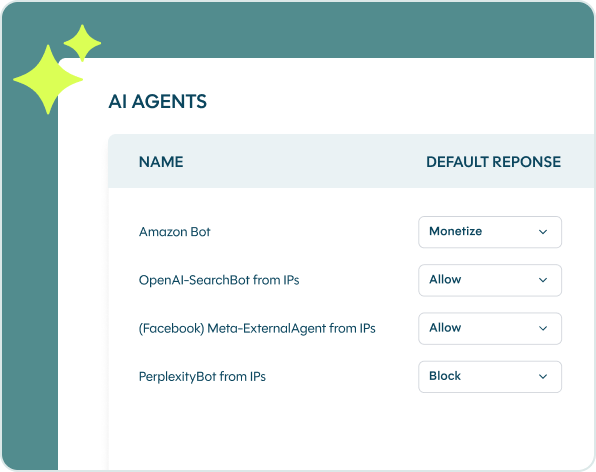

DataDome Bot Protect analyzes every incoming request across 250+ signals: device fingerprints, behavioral patterns, IP reputation, session dynamics, and more. The engine processes 5 trillion signals daily and makes blocking decisions in under 2 milliseconds, powered by 85,000+ customer-specific models that continuously learn and adapt.

Rather than relying on static rules, DataDome detects anomalies in real time. When a threat is identified, the platform can block or verify the suspicious request—while allowing legitimate users and verified good bots through without friction.

Before DataDome, Displays2go spent 75 to 100 hours over 18 months dealing with bot traffic, including reports, segmentation, discussions with suppliers, and internal meetings. After adding DataDome Bot Protect to their tech stack, Displays2go completely eradicated their bot traffic, resulting in cleaner and more reliable analytics data.

How to filter bot traffic in Google Analytics (GA4)

Filtering bots from your analytics is important for clean data, and accurate reporting depends on separating good bots from bad ones. But it is critical to understand that filtering bots from reports does not stop them from hitting your site. Think of it as removing noise from your dashboard, not removing the source of the noise.

GA4 automatically excludes traffic from known bots and spiders using a combination of Google’s own research and the IAB/ABC International Spiders & Bots List.[2] Unlike Universal Analytics, you cannot disable this setting, and you cannot see how much traffic it excludes.

Create IP-based filters for known bad traffic

If you have identified specific IP addresses or ranges associated with bot activity in your server logs:

- Go to Admin → Data Streams → select your web data stream

- Click Configure tag settings → click Show all

- Click Define internal traffic

- Add the offending IP addresses with a descriptive label (e.g., “bot traffic – data center IPs”)

- Activate the corresponding data filter under Data Settings → Data Filters

Use explorations and segments

For more granular analysis, build custom explorations in GA4 that segment out suspicious traffic patterns: zero-second sessions, traffic from untargeted countries, or sessions with abnormally high event counts but no meaningful engagement.
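The same three conditions can be applied to session data exported from your analytics tool. The sketch below works on plain dictionaries. The field names (`engagement_seconds`, `country`, `event_count`, `engaged`) and the event threshold are assumptions for illustration, not GA4's export schema.

```python
def suspicion_reasons(session, targeted_countries, event_threshold=50):
    """Return the list of reasons a session looks automated (empty if none apply)."""
    reasons = []
    if session["engagement_seconds"] == 0:
        reasons.append("zero-second session")
    if session["country"] not in targeted_countries:
        reasons.append("untargeted country")
    if session["event_count"] >= event_threshold and not session["engaged"]:
        reasons.append("high event count without engagement")
    return reasons


def split_sessions(sessions, targeted_countries):
    """Partition sessions into (clean, suspicious) lists using the checks above."""
    clean, suspicious = [], []
    for s in sessions:
        (suspicious if suspicion_reasons(s, targeted_countries) else clean).append(s)
    return clean, suspicious
```

As with GA4 segments, this only separates sessions for reporting. It has no effect on the requests themselves.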

Why GA4 filtering is not enough

GA4 filtering solves a reporting problem, not a security problem. Even after you clean your analytics data, those bots are still hitting your site, consuming server resources, and potentially executing attacks.

GA4’s built-in bot exclusion only catches known bots, such as those on the IAB list. Sophisticated bots that rotate IPs, spoof user agents, and mimic human behavior patterns slip right through. Manual IP filtering is reactive and labor-intensive. You are always one step behind.

The bottom line: clean analytics data helps you make better marketing decisions. But to actually stop bots, you need to block them before they reach your servers and your analytics.

When DataDome went live for industrial supply retailer Zoro, Google Analytics traffic dropped 25–30% overnight—revealing just how much bot traffic had been inflating their metrics all along.

What is the business impact if you do not stop bot traffic?

Skewed analytics and wasted ad spend

When bots make up a significant share of your traffic, every metric you rely on becomes unreliable. Pageviews, bounce rates, session durations, conversion rates, and geographic distribution all get distorted.

The downstream consequences are serious. A/B tests produce meaningless results when bots pollute the sample. Marketing campaigns appear to perform differently than they actually do. Attribution models break down. For businesses spending significant amounts on digital advertising, bot-inflated metrics lead to wasted ad spend and poor optimization decisions.

Server performance and infrastructure costs

Every bot request consumes server resources: CPU, memory, and bandwidth. High-volume bot traffic can degrade your site’s performance for real customers, increasing page load times and hurting user experience.

In extreme cases, bot-driven traffic spikes can cause outages. DataDome reduced SNCF Connect’s infrastructure costs by 10% simply by blocking scraping traffic that was straining their servers.

Bot management: Putting it all together

What is bot management?

Bot management is the practice of detecting, classifying, and responding to automated traffic in real time. The goal is not to block all bots. That would break search engine indexing and other essential services. The goal is to allow good bots, stop bad bots, and manage the gray area in between.

An effective bot management solution does four things:

- Detects bots by analyzing behavioral signals, device fingerprints, IP reputation, and request patterns. It goes far beyond simple user agent checks or IP lists.

- Classifies traffic as human, good bot, or bad bot with high accuracy and minimal false positives.

- Responds with the right action for each category: allow, block, rate-limit, or verify.

- Adapts continuously as bot operators change their tactics.
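The detect, classify, and respond steps can be pictured as a small pipeline. The sketch below is a deliberately simplified toy, not a description of how DataDome or any other product works: the signal names, weights, and thresholds are invented for illustration, and the extra "suspect" class is an assumption standing in for the gray area. The fourth step, continuous adaptation, is not modeled.

```python
from dataclasses import dataclass


@dataclass
class RequestSignals:
    verified_good_bot: bool     # crawler identity confirmed upstream (assumed)
    headless_fingerprint: bool  # device fingerprint resembles an automation tool
    ip_reputation: float        # 0.0 (clean) to 1.0 (known bad); hypothetical scale
    requests_last_minute: int


def classify(s):
    """Return 'good_bot', 'bad_bot', 'suspect', or 'human' using toy thresholds."""
    if s.verified_good_bot:
        return "good_bot"
    score = 0.0
    score += 0.5 if s.headless_fingerprint else 0.0
    score += 0.4 * s.ip_reputation
    score += 0.3 if s.requests_last_minute > 120 else 0.0
    if score >= 0.7:
        return "bad_bot"
    if score >= 0.3:
        return "suspect"
    return "human"


# One response per class; 'verify' stands for a challenge shown to gray-area traffic.
ACTIONS = {"good_bot": "allow", "human": "allow", "suspect": "verify", "bad_bot": "block"}


def respond(s):
    """Map a request's signals to an action: allow, verify, or block."""
    return ACTIONS[classify(s)]
```

Combining several signals means no single attribute, such as the IP address or user agent, decides the outcome on its own.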

Every method we covered in this guide (rate limiting, WAFs, CAPTCHAs, robots.txt, honeypots) addresses one piece of the problem. Each has gaps that sophisticated bots exploit. GA4 filtering helps you see the damage after the fact, but it does nothing to prevent it.

DataDome Bot Protect takes a fundamentally different approach. Instead of relying on static rules or known-bad lists, it analyzes every incoming request in real time across 250+ signals: device fingerprints, behavioral patterns, IP reputation, session dynamics, and more. The engine processes 5 trillion signals daily, makes blocking decisions in under 2 milliseconds, and runs 85,000+ customer-specific models that continuously learn as bot tactics evolve.

If you’d like to learn more, book a demo today.

FAQs

How do I stop bot traffic?

The most effective approach is a dedicated bot management solution that analyzes traffic in real time using behavioral signals and machine learning. Basic measures like rate limiting, CAPTCHAs, and WAF rules reduce some bot traffic, but they will not stop sophisticated bots that rotate IPs, mimic human behavior, and solve challenges automatically. A solution like DataDome detects and blocks malicious bots at the edge before they reach your servers, while allowing legitimate traffic through.

How do I stop ticket bots?

Ticket bots use speed and automation to snap up inventory the instant it becomes available. Stopping them requires real-time detection that can distinguish between a human buyer and an automated script within milliseconds, before the transaction completes. An AI-powered bot management solution that analyzes device fingerprints, behavioral patterns, and session dynamics in real time is the most reliable defense.

Why do bots exist?

Bots exist because automation is valuable. On the legitimate side, search engines use bots to index the web, monitoring services use bots to track uptime, and businesses use bots to aggregate data. On the malicious side, bots enable attacks at a scale and speed that humans cannot match, testing millions of stolen credentials, scraping thousands of product pages, or flooding a checkout page with fraudulent orders. As AI tools have become more accessible, the cost and technical skill required to deploy bots have dropped, contributing to the growth in bot traffic.

Does robots.txt stop bad bots?

No. A robots.txt file is a set of instructions that tells bots which parts of your site they should and should not visit. Legitimate bots like Googlebot follow these directives. Malicious bots ignore them entirely. DataDome’s research found that 88.9% of robots.txt files explicitly disallow GPTBot. Robots.txt is useful for managing how search engines crawl your site, but it provides no actual security or enforcement.

References

- [1] FBI Internet Crime Complaint Center, 2024 Annual Report (fbi.gov)

- [2] Google Analytics Help: Known bot-traffic exclusion (support.google.com)

- [3] 2025 Verizon Data Breach Investigations Report (verizon.com)