How to Prevent & Protect Your Website Against Content Theft

What is content theft?

Content theft is the act of stealing another person’s or company’s published content (especially from websites and apps, whether they specialize in e-commerce, editorial content, classified ads, etc.), typically to repurpose it and generate traffic (and possibly advertising revenue) for the thief’s own benefit. The easiest way to copy large volumes of online content is to use scraper bots, sometimes known as web crawlers: automated software that scans your site at frequent intervals to copy the content the bot operator wants.

Ever ask yourself, “Are other people earning good money off my business’ content?”

That’s a smart question.

High-quality content generates traffic, customer loyalty and sales. Whether it’s editorial content, product catalogs, or classified ads, it often forms the basis for a large part of the revenue you generate online.

But creating quality content is hard work, and it’s costly. Stealing content, on the other hand, is very easy. Given the chance, unscrupulous actors will simply copy what others have created and exploit it for their own benefit. Ethically and legally, the practice is more than questionable, but it happens everywhere, all the time.

Is your online business a victim of content theft? If it is, how exactly does it harm you? And most importantly, how can you protect website content from being copied in the future?

What industries are most targeted for content theft?

If your content is valuable, more likely than not, someone will want to use it for their own benefit.

Media sites have struggled with content theft since the dawn of the internet. Content aggregators scrape and repurpose media content in order to generate traffic and advertising revenue for themselves, without doing any of the writing and editorial work. Media monitoring service providers also scrape editorial content in order to use it in the tools and reports they sell, typically without any compensation to authors or publishers.

On e-commerce and classified ad sites, content thieves often target product descriptions, prices, and customer reviews. The motivation is obvious: for a competing site, copying your content is a whole lot easier than doing the painstaking work of building their own.

For content theft to be truly profitable, however, it must be done at scale. And the easiest way to efficiently copy large volumes of online content is to use scraper bots, also known as web crawlers: automated software that will scan your site at frequent intervals and copy the content they are interested in.

The most motivated scraper bot operators will go to great lengths to disguise their bots as human users. They are therefore difficult to detect, and website administrators often have no idea how much automated traffic they really have.

Rakuten France is a leading e-commerce site, which publishes 600,000 new ads every day. When the DataDome bot management technology was installed on the site, it revealed that an astonishing 75% of the site’s traffic was generated by bots. By far the most active category of bots was web scrapers, which were copying the rich product and customer data available on the site.

How do you know if your content is stolen?

If you suspect that your online content is being stolen, there are multiple tools and techniques you can use to find out if your content is indeed being republished without your permission.

For example, you can add an extract of your content (choose something that will be unique) to Google Alerts. Google will automatically send you a notification if an identical extract is published somewhere else. The service is free.

Copyscape is another option, which has been created specifically for this purpose. Its Copysentry service automatically monitors the web for copies of your content, and sends you an email alert as soon as they appear. Other duplicate content detection services include plagiarism tools like Unicheck or Plagiarism Checker, as well as image search and recognition tools like Tineye.

However, all these tools have important limitations:

- If you have a lot of content, the implementation effort can be prohibitive

- They may work well for editorial content, but they will not help you identify scraping and theft of dynamic content like prices

- Most importantly, identifying content theft is only the first step—none of these tools will help you prevent scrapers from stealing your online content.
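To give a sense of the kind of comparison duplicate detection relies on, here is a minimal Python sketch that scores how much of one text reappears in another. It uses word "shingles" (overlapping runs of words) and Jaccard similarity, which is one common approach and not necessarily what any of the tools named above use. The function names and the shingle size of 5 are assumptions made for illustration.

```python
import re


def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Text shorter than one shingle: treat the whole text as a single unit.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(original: str, candidate: str, size: int = 5) -> float:
    """Share of shingles the two texts have in common, from 0.0 to 1.0."""
    a, b = shingles(original, size), shingles(candidate, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)
```

A score close to 1.0 suggests the candidate page is largely a copy of yours. Like the tools above, a check of this kind only detects copies after the fact, and it does not help with dynamic content such as prices.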

What to Do if Your Content Has Been Stolen

If you’ve confirmed your online content was stolen and used on another website, ultimately it’s up to you to get it taken down. Here are a few steps you can take.

1. Contact the website owner.

While this step may seem futile—after all, the owner likely knew they were stealing your content—it’s important to start here. Whether there’s a contact form on the site, or you use a tool like Hunter.io to look up the email address associated with the domain, begin by asking nicely. When crafting your message, don’t forget the following:

- Be clear about the content you want taken down.

- Provide the webmaster a deadline to respond to your message.

- Inform the webmaster of the next steps you will take if they don’t respond and comply.

- Request they cease any future scraping activity on your website.

Even if this step fails, it will be useful to have proof that you attempted direct contact.

2. File a DMCA takedown request directly with the website host.

First things first, use a tool like ICANN’s registration data lookup to find out which company hosts the website that stole your content. This should give you contact information for the company so you know where to send your DMCA takedown notice. When writing your notice, be sure to include:

- Your name/company name and contact information.

- A description of the copied material.

- The URL of your original material and the URL of the copied material.

- Your request, such as the immediate removal of the copied material.

Note that DMCA takedown requests are meant primarily for websites hosted in the US. If the infringing website is hosted elsewhere, you will likely need to use the next step.
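If you need to send several notices, it can help to assemble them from a small template. The Python sketch below covers only the four items listed above. The class name, field names, and wording are hypothetical, this is not legal advice, and the hosting company may ask for additional statements before it acts on a notice.

```python
from dataclasses import dataclass


@dataclass
class TakedownNotice:
    """Holds the items the notice should include (hypothetical field names)."""
    sender_name: str        # your name or company name
    sender_contact: str     # email address or other contact information
    description: str        # description of the copied material
    original_url: str       # where your original material lives
    infringing_url: str     # where the copy was published
    request: str = "Please remove the copied material immediately."

    def render(self) -> str:
        """Return the notice as plain text, ready to paste into an email."""
        return "\n".join([
            "DMCA Takedown Notice",
            "",
            f"From: {self.sender_name} ({self.sender_contact})",
            f"Copied material: {self.description}",
            f"Original URL: {self.original_url}",
            f"Infringing URL: {self.infringing_url}",
            "",
            self.request,
        ])
```

Calling `render()` once per copied page keeps each notice specific about which URL you want taken down.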

3. Contact Google or the search engine directly.

While this option does not remove the stolen content from the offending website, it can remove it from Google (or other search engine) results, meaning only your website’s content will be visible. If a single website contains multiple pages of content copied from you, you will need to repeat the process for every page.

For Google, you can utilize this form to request the removal of copied content from search results.

How bad is your content theft problem? Find out today.

Do you suspect that your online content is being copied, but lack the data to prove it? If you need content theft protection, start a 30-day free trial of the DataDome bot protection solution, and discover exactly what you’re up against. It takes only a few minutes to set up on your own, and you don’t need a credit card.

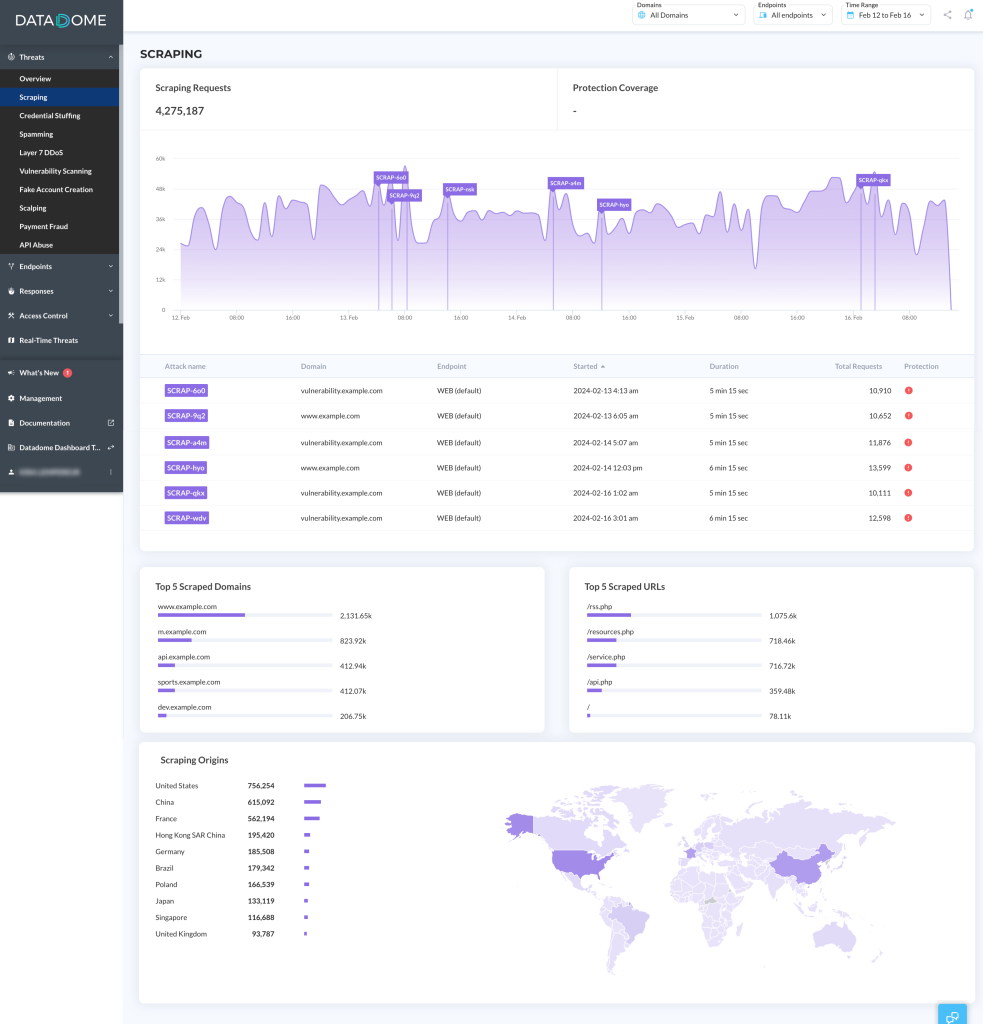

Once you have activated the free trial, the DataDome dashboard will show all bot traffic to your website in real time. You can see how your traffic compares to an industry benchmark, and find detailed information about all the different types of bots that come to your website, including scraper bots—aka content thieves.

The DataDome web scraping dashboard displays a timeline of scraping requests, what pages attackers are trying to steal, and where they’re coming from—so you can stop content theft in its tracks.

How to protect website content from being stolen?

Content theft can be infuriating, but keep your cool: there are ways to protect your online content and make sure your hard labor doesn’t benefit your competitors.

1. Update Your robots.txt File

In theory, you can use your robots.txt file to tell scraper bots to go away. A respectful bot should be programmed to look for that file, read it, and follow its rules before it does anything else.

Realistically, however, it’s not very effective. Most bot operators will happily ignore your instructions. By all means, do optimize your robots.txt file, but don’t count on it to stop content theft for good.
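To make this concrete, here is a small Python sketch of what a respectful bot does with your rules, using the standard library’s `urllib.robotparser`. The robots.txt content, the bot name `ExampleScraperBot`, and the example.com URLs are made up for illustration. Keep in mind that nothing forces a scraper to run a check like this.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: ban one named bot entirely and keep
# everyone else out of /private/.
ROBOTS_TXT = """\
User-agent: ExampleScraperBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def is_allowed(user_agent: str, url: str) -> bool:
    """Return True if the rules above permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)
```

With these rules, `is_allowed("ExampleScraperBot", "https://example.com/articles/1")` is false, while an ordinary browser user agent may fetch the same page. The file only works when the bot chooses to ask.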

2. Protect Your Online Content With Website Terms & Conditions

You can use your website terms and conditions to prohibit any scraping and possible stealing of content from your site, even content that can’t be copyrighted such as prices and customer reviews.

If you want to try to protect your online content with this method, you can download a template here.

Enforcing your terms and conditions may require significant time and perseverance, however. Just like optimizing your robots.txt file, updating your terms and conditions is a useful, but probably not sufficient measure.

3. Protect Your Images With a Watermark

While not infallible, a watermark can help ensure any images stolen from your website are clearly attributed to you. Make sure the watermark cannot be easily cropped out of the image.

4. Disable Easy Ways to Copy Your Content

Scrapers can use a host of simple mechanisms to gather your content, such as right-click menus to save images and copy-and-paste functionality. Many plugins and tools are available to disable these shortcuts. You should also consider configuring your RSS feed to publish only summaries of your content, not the full text.

However, be warned: the more access controls you remove, the more difficult your website will be to access for all users.

5. Prevent Content Theft With Anti-Bot Technology

To stop content theft once and for all, you need an effective technical solution: web scraping protection software. Ideally, the solution should identify and block any unwanted web scrapers or ticket scalpers without your intervention, so that you can focus on developing and monetizing more online content.

This is exactly what DataDome’s bot management solution provides. Using a sophisticated bot detection engine, built on artificial intelligence and machine learning, it protects web applications, mobile apps, and APIs from malicious scraper bots.

The Risks & Consequences of Content Theft

Most online business owners are aware of web scraping and content theft, but few measure the full impact that this activity can have on their business. Here are some of the most dire potential risks and consequences of content theft.

Unfair Competition

Competitors who scrape your product listings and prices can display the same offer as you with very little effort, and make sure to always keep their prices lower than your own.

KuantoKusta, Portugal’s leading price comparison site, discovered that certain merchants dropped their prices almost instantly whenever a competitor changed theirs. It was unfair to the merchants who played by the rules, and also jeopardized KuantoKusta’s own paid price comparison service.

Some online businesses invest a lot of time and money in keeping extensive online product catalogs up to date, only to see competitors piggybacking off their efforts by stealing their content.

Rubix and SGDB France are both industrial distributors with catalogs of millions of references, which they continuously enrich and update. Offering complete product data for every reference is a major competitive advantage, so it will come as no surprise that both distributors were plagued by aggressive content scraping before they installed an effective bot and content theft protection solution. This intellectual property theft represented a real business risk for the companies.

Duplicate Content & Lower SEO Rankings

If your content is stolen by scraper bots and republished on other websites without your consent, it may harm your SEO rankings.

Duplicate content still represents a challenge for search engines—and by extension, for content creators.

When there are multiple versions on the internet of “appreciably similar” content, as Google calls it, search engines have to decide which version to rank for query results. Since they generally prefer not to list multiple versions of the same content, they must choose one. And although Google is relatively good at identifying the original source, it does not always get it right.

Other sites also have to choose between the duplicates. So instead of all the relevant inbound links pointing to your content, the link equity (an important ranking factor) can be spread among multiple websites, diluting search visibility for each.

Simply put, if your content is systematically scraped and stolen, your content creation efforts may just serve to rank someone else’s site ahead of your own. The end result, of course, is loss of traffic and revenue. For a media site, any loss of traffic and engagement translates to lower subscription and advertising revenues. For an e-commerce site, every visitor lost to a competing site is a lost sales opportunity.

Poor Website Performance & Denial of Service (DoS)

To copy as much website content as possible, web scrapers will often send a massive number of hits to your site in a short period of time while scraping and stealing your content. This can saturate your servers, causing your pages to load slower than they otherwise would, or even taking down your site entirely.
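A burst like this can be spotted in your own traffic with a simple sliding-window counter. The Python sketch below is a deliberately naive illustration, not a description of how DataDome or any other product works. The class name, the thresholds, and the use of the IP address as the client identifier are all assumptions. Scrapers that spread their requests across many IP addresses will slip past a check this basic.

```python
from collections import defaultdict, deque


class BurstDetector:
    """Flag clients that send more than max_requests within window_seconds."""

    def __init__(self, max_requests: int = 100, window_seconds: float = 60.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # One queue of recent request timestamps per client.
        self._hits: dict[str, deque[float]] = defaultdict(deque)

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one request; return True if the client is over the limit."""
        hits = self._hits[client_id]
        hits.append(timestamp)
        # Drop requests that have fallen out of the sliding window.
        while hits and timestamp - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests
```

In practice you would feed `record()` from your access logs or request middleware, passing the client identifier and the request time in seconds.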

Aggressive content scraping was regularly causing website performance issues and downtime on the websites of Northland Properties, one of Canada’s most trusted hospitality businesses.

Our industry is really competitive. If people come to our website and find it too slow, they will just leave us without a reservation.

Ben Norouzizadeh, Software development team lead, Northland Properties

To add insult to injury, Google penalizes slow sites, too. So in addition to creating a poor user experience for your legitimate visitors, content scraping can also cause your website to appear lower in search results. This will, of course, drive fewer visitors to your site in the first place.

Is web scraping legal?

Is web scraping even legal? Unfortunately, there is no easy answer to this question.

Web scraping is a legal gray area with lots of ifs, buts and maybes. Legislation and precedents vary between countries, and even within one country, court decisions are often not uniform.

Nonetheless, here are some key points to consider:

- Using a scraper bot is not illegal (in and of itself). If you want to scrape your own site, go ahead!

- Some web scrapers are useful, and website administrators might indeed welcome them. For example, aggregators that scrape and publish your content to popular websites that link back to you can bring you additional qualified traffic.

- Copyright laws still apply, regardless of which technology is used to copy the content.

- Extracting personally identifiable data, such as names and email addresses, is restricted by the European General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), regardless of the technology being used.

Protect Against Content Theft with DataDome

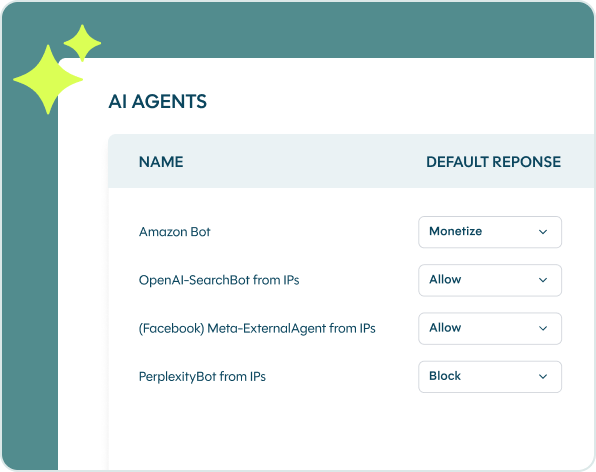

DataDome is easy to install on any web architecture, and it runs on autopilot. You will receive a real-time notification whenever the solution detects scraping attacks on your site, but you don’t need to do anything. Once you have set up an allow list of trusted partner bots, DataDome will take care of all unwanted traffic, so you no longer have to worry about your online content being stolen.

Start a free trial or book a live demo today.

A year or so ago, we met some people who happen to be in the web scraping business, and developed a friendly relationship with them. One day, over a beer, our scraper-operating friends told us that if we really wanted to protect our websites, we should go with DataDome. The DataDome bot protection was making their lives very hard!

Michael Romer, Head of Product and IT, LV digital (Traktorpool)